AI Assurance | Secure & Trustworthy Artificial Intelligence

What Organisations Need to Trust AI

Organisations need reliable evidence of whether an AI system is trustworthy and future-proof, under which conditions it can be deployed, and how uncertainties, errors or operational changes should be managed.

AI Assurance − Our Approach

AI Assurance is a structured approach to the assessment, assurance and verification of AI systems in real-world operation. It provides the foundation for making informed decisions about the deployment of AI by creating transparency around risks and demonstrating the trustworthiness of a system in a traceable and verifiable manner.

The focus is not solely on the model itself, but on the actual behaviour of the AI system in interaction with data, processes, people and organisations. We assess AI systems consistently within their specific operational context, combining technical evaluation, organisational integration and regulatory requirements into a single integrated assessment.

How We Put AI Assurance into Practice

AI Assurance is not a one-off test, but a structured, lifecycle-oriented process. Its goal is to identify risks at an early stage, define clear requirements and systematically demonstrate the trustworthiness of an AI system.

Our approach consists of five key steps:

Context & Risk Analysis

We analyse the operational context, risks and relevant boundary conditions, including regulatory requirements, standards and domain-specific constraints. These are consolidated into one consistent set of requirements.

Your benefit:

- Transparency regarding real operating conditions

- Early identification of critical risks

- A clear basis for informed decision-making

Deriving Requirements

Based on the operational context, we define concrete and verifiable requirements, including metrics, thresholds and release criteria. These are operationalised in a way that makes them both technically testable and organisationally applicable.

Your benefit:

- Clear criteria instead of abstract principles

- Traceable foundations for decision-making

- Structured guidance for development, assessment and release processes
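
As a purely illustrative sketch of what "metrics, thresholds and release criteria" can look like once operationalised, the following Python snippet checks measured values against a set of requirements. The metric names, threshold values and the `Requirement` class are hypothetical examples chosen for illustration; they are not taken from a specific project or from the safeAI Kit.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Requirement:
    """One verifiable requirement: a metric with a threshold (hypothetical)."""
    name: str                      # metric name, assumed for illustration
    threshold: float               # limit value, assumed for illustration
    higher_is_better: bool = True  # direction in which the metric is "good"

    def is_met(self, measured: float) -> bool:
        if self.higher_is_better:
            return measured >= self.threshold
        return measured <= self.threshold


def failed_requirements(requirements, measurements):
    """Return the names of requirements that are unmet or were not measured."""
    failed = []
    for req in requirements:
        value = measurements.get(req.name)
        if value is None or not req.is_met(value):
            failed.append(req.name)
    return failed


# Hypothetical requirement set and measurement results.
requirements = [
    Requirement("accuracy", 0.95),
    Requirement("expected_calibration_error", 0.05, higher_is_better=False),
]
measurements = {"accuracy": 0.97, "expected_calibration_error": 0.08}

print(failed_requirements(requirements, measurements))
```

In this sketch a missing measurement counts as a failed requirement, so that gaps in the evidence cannot pass a release check unnoticed.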

Technical Assessment

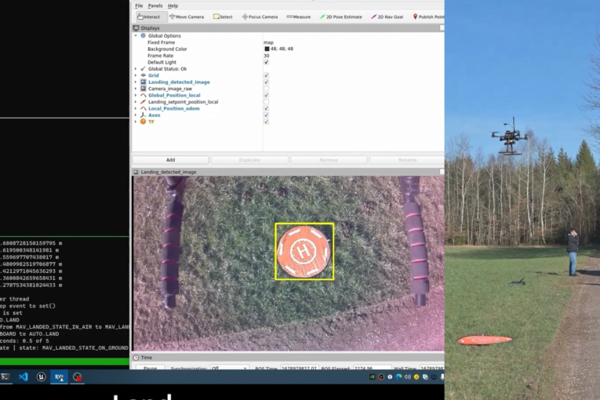

AI systems are systematically assessed − from simulations and controlled test environments to realistic operational scenarios. We deliberately analyse rare and critical situations, as well as system behaviour under uncertainty.

Your benefit:

- Reliable insights into robustness and performance limits

- Early identification of vulnerabilities and risks

- Testable and auditable evidence

Assurance Documentation & Release Support

All results are structured and translated into auditable evidence. At the same time, we support release and alignment processes with internal and external stakeholders.

Depending on the outcome, this may result in:

- Full approval

- Conditional approval

- Concrete action plans for risk reduction

Your benefit:

- Confidence in audit and certification processes

- Clear communication with decision-makers and assessors

- Reduced effort for rework and coordination

Operations, Monitoring & Continuous Improvement

AI Assurance does not end with the initial assessment. Even after release, the system remains under continuous review: we monitor system behaviour, detect changes and perform targeted re-evaluations. This ensures that AI systems remain reliable, traceable and responsibly deployable over the long term.

Your benefit:

- Stable and controlled long-term operation

- Early detection of issues during live operation

- Sustainable assurance of trustworthiness
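
To make "detect changes" more tangible, here is a minimal sketch of one common drift indicator, the population stability index (PSI), which compares the distribution of a single input feature during operation against a reference sample. This is an illustrative assumption about how such a check could be built, not a description of the monitoring actually used. The function name, the number of bins, the smoothing constant and the sample data are hypothetical.

```python
import math


def population_stability_index(reference, current, bins=10):
    """PSI between two non-empty samples of one numeric feature.

    Bin edges are derived from the reference sample; values outside that
    range are clamped into the first or last bin.
    """
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # avoid zero width for constant data

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # Additive smoothing (0.5 per bin) keeps empty bins away from log(0).
        total = len(values) + 0.5 * bins
        return [(c + 0.5) / total for c in counts]

    ref_p = proportions(reference)
    cur_p = proportions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref_p, cur_p))


# Hypothetical data: the live sample is shifted relative to the reference.
reference = [i / 100 for i in range(100)]
shifted = [x + 0.5 for x in reference]

psi = population_stability_index(reference, shifted)
# 0.2 is a frequently quoted rule-of-thumb limit, used here as an assumption.
print("re-evaluation recommended" if psi > 0.2 else "no significant drift")
```

A PSI of zero means the two binned distributions are identical; larger values indicate a stronger shift. In practice the limit that triggers a targeted re-evaluation would be derived from the requirements of the specific system.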

AI in the Context of Complex Integrated Systems

AI systems are often deployed as part of complex cyber-physical and mechatronic systems. For this reason, we do not consider AI Assurance in isolation, but always in interaction with sensors, software, hardware, people and operational processes.

Your Benefit

AI Assurance provides the foundation not only for technically assessing AI systems, but also for making informed decisions about their deployment. Risks become visible at an early stage, requirements remain traceable and evidence becomes robust − even in complex and regulated environments.

- Informed decisions on AI deployment

AI systems are not assessed in isolation, but within their specific operational context − providing a reliable basis for release and operational decisions.

- Early and targeted risk management

Risks arising from the interaction between models, data and operational conditions are identified at an early stage − before they become critical in live operation.

- Verifiable compliance and regulatory confidence

Requirements derived from standards and regulation are translated into concrete, testable criteria and auditable evidence.

- Realistic assessment instead of isolated test results

What matters is not only model performance, but the actual behaviour of a system under realistic operating conditions.

- Stable and future-proof operation

Monitoring, re-evaluation and continuous improvement support a controlled and sustainable long-term deployment of AI systems.

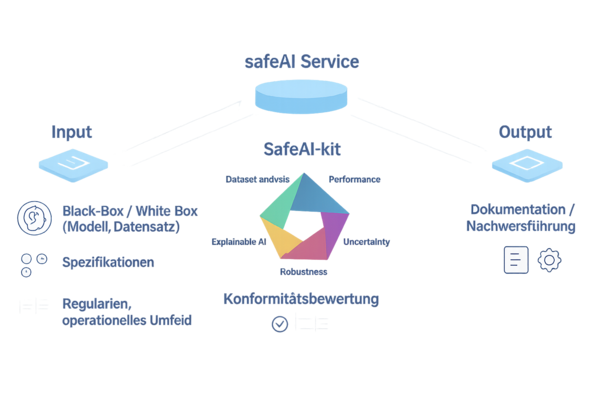

To ensure that AI Assurance goes beyond abstract principles, we translate requirements into concrete assessment and verification procedures. The safeAI Kit supports this process as a modular toolbox for technical analysis, evidence generation and monitoring.

safeAI Kit: Our Toolkit for Safe AI

The safeAI Kit combines standardised methods, established best practices and proprietary approaches to systematically assess key characteristics such as robustness, uncertainty, explainability and system limitations. Our methodological work is based not only on practical experience, but also on the active development of standards and guidelines. This creates robust and traceable evidence that is both technically sound and suitable for decision-making and audits.

What does this mean for you?

- Consistent and traceable assessments

Standardised and reusable assessment modules ensure that results remain comparable and transparent.

- Robust insights instead of isolated tests

By combining different methods, we enable a well-founded assessment of actual system behaviour − beyond isolated individual tests.

- Efficient implementation of AI Assurance

Modular building blocks reduce effort and enable structured, scalable assessment and verification processes.

- Support throughout the entire lifecycle

The safeAI Kit supports not only initial assessments, but also monitoring, drift detection and continuous re-evaluation during operation.

Our Expertise in Standardisation and Regulation

We combine current insights from AI research, standardisation and regulation with many years of experience in testing, analysis and certification processes.

Through our active participation in national, European and international standardisation committees, we contribute to the further development of requirements and assessment approaches for AI systems.

By initiating DIN SPEC 92005 on the “Quantification of Uncertainties in Machine Learning”, we have made a concrete contribution in this field. This work also served as the foundation for the ISO/IEC standardisation project ISO/IEC TS 25223 on uncertainties in AI systems. Development of the standard under IABG leadership is expected to continue until early 2028.

You can also read the interview with our standardisation expert Dr Lukas Höhndorf: “Normung macht den AI Act greifbar” (“Standardisation makes the AI Act tangible”; DIN e.V., 11 December 2025).


How can we help you?

Please fill in the form and we will get in touch with you as soon as possible.
